Scaling AI Use Cases: Playbook for Business Growth

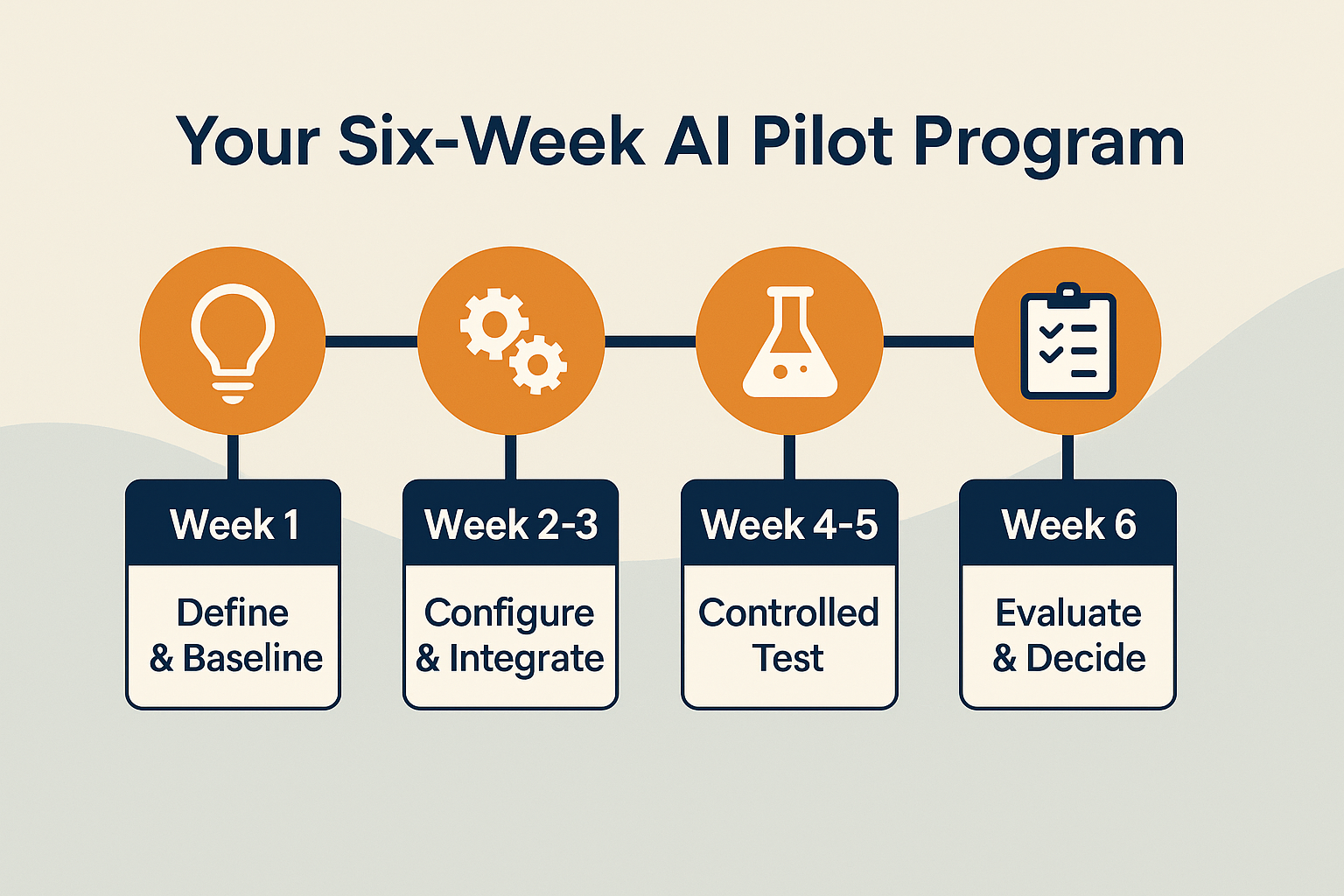

You’ve successfully piloted an AI solution, measured its ROI, and perhaps even operationalized it. That’s a huge win! However, as IBM wisely observes, pilots are fun, but scaling is where real value is created. Many businesses stop at isolated experiments, missing out on the exponential benefits that come from systematically expanding AI’s footprint across their organization.

The AI Maturity Ladder: Replicate, Integrate, Transform

Stage 1: Replicate – Reuse, Refine, and Reapply

With one solid success in production, it’s time to leverage what you’ve learned.

Open Your Use-Case Backlog: Go back to the backlog of potential AI use cases you likely created during your initial Discovery phase. Prioritize two or three more high-value use cases that share characteristics or data needs with your successful pilot. For structured guidance, consult resources such as OpenAI’s 2025 checklist on identifying and prioritizing new use cases.

Rapid Readiness Checks: Because you’ve been through the process once, you can execute readiness checks (data availability, stakeholder buy-in, KPI definition) much more quickly. Your team already understands the drill.

Iterate on Feedback: Apply lessons learned from your first pilot’s operationalization and measurement phases. What worked well? What could be smoother? This continuous feedback loop accelerates replication.
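As a rough illustration of the replicate step, the sketch below filters a backlog by the three readiness checks named above and ranks what remains. The `UseCase` fields, the 1 to 5 scales, the value-times-similarity score, and the sample backlog entries are all assumptions made for this example, not a prescribed scoring method.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_value: int     # assumed scale: 1 (low) to 5 (high)
    pilot_similarity: int   # assumed scale: 1 to 5, overlap in data and process with the pilot
    data_available: bool
    stakeholder_buy_in: bool
    kpi_defined: bool

    def is_ready(self) -> bool:
        # The three readiness checks: data availability, buy-in, KPI definition.
        return self.data_available and self.stakeholder_buy_in and self.kpi_defined

    def score(self) -> int:
        # Hypothetical ranking rule: favor high value that also resembles the pilot.
        return self.business_value * self.pilot_similarity


def pick_next(backlog, limit=3):
    """Return up to `limit` ready use cases, highest score first."""
    ready = [uc for uc in backlog if uc.is_ready()]
    return sorted(ready, key=lambda uc: uc.score(), reverse=True)[:limit]


# Illustrative backlog entries (made up for this sketch).
backlog = [
    UseCase("Invoice triage", 4, 5, True, True, True),
    UseCase("Churn prediction", 5, 2, True, False, True),
    UseCase("Support ticket routing", 3, 4, True, True, True),
    UseCase("Demand forecasting", 5, 3, False, True, True),
]

for uc in pick_next(backlog):
    print(uc.name, uc.score())
```

Use cases that fail a readiness check are not discarded; they stay in the backlog until the missing data, sponsor, or KPI is in place.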

Stage 2: Integrate – Connecting Systems End-to-End

This stage moves beyond isolated AI tools to connecting them deeply with your core business systems and processes.

Beyond the “Sandbox”: While initial pilots might use AI as a standalone tool, integration means connecting it directly to your CRM, ERP, marketing automation platforms, or internal databases.

Automate Workflows: Look for opportunities to automate entire workflow segments where AI can seamlessly hand off tasks to other systems or trigger subsequent actions. For example, AI-qualified leads directly pushed into your sales CRM, or AI-generated reports automatically distributed to stakeholders.

Data Flow Optimization: Ensure smooth, secure, and automated data flow between your AI models and your existing enterprise data infrastructure. This is critical for reliable, high-volume AI operations.
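The lead handoff mentioned above could look something like the following sketch. It assumes a lead-qualification model that outputs a score between 0 and 1 and a 0.7 threshold chosen arbitrarily for illustration. `InMemoryCRM` is a hypothetical stand-in; a real integration would call your CRM vendor's own API instead.

```python
from dataclasses import dataclass, field


@dataclass
class Lead:
    email: str
    ai_score: float  # assumed: 0.0 to 1.0 from a hypothetical qualification model


@dataclass
class InMemoryCRM:
    """Hypothetical stand-in for a real CRM client."""
    created: list = field(default_factory=list)

    def create_lead(self, lead: Lead) -> None:
        self.created.append(lead.email)


def hand_off(leads, crm, threshold=0.7):
    """Push leads at or above the threshold to the CRM; return the rest for human review."""
    needs_review = []
    for lead in leads:
        if lead.ai_score >= threshold:
            crm.create_lead(lead)
        else:
            needs_review.append(lead)
    return needs_review


crm = InMemoryCRM()
leads = [Lead("a@example.com", 0.91), Lead("b@example.com", 0.42)]
review = hand_off(leads, crm)

print(crm.created)
print([lead.email for lead in review])
```

Routing low-scoring leads to a review queue rather than dropping them keeps a person in the loop while the model's accuracy is still being established.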

Stage 3: Transform – Building an AI Center of Excellence

This is the ultimate goal of scaling AI use cases: establishing an internal capability that fosters long-term AI innovation and adoption. If you work with an external advisor, their role will gradually shift from hands-on builder to strategic guide.

Form an Internal AI Center of Excellence (CoE): This doesn’t have to be a large, dedicated department. For an SMB, it might be a cross-functional group of passionate internal champions, perhaps the “AI champions” you trained earlier. Their role is to own experimentation, evaluate new AI technologies, and manage change.

Establish a Monthly “AI Council” Meeting: This is a crucial change-management tactic. Gather cross-functional leaders from different departments to share wins, discuss new opportunities, raise risks, and collectively keep the AI momentum alive. This ensures alignment and broad buy-in.

Keeping the Momentum Alive: Essential Change Management Tactics

Successful enterprise-wide AI rollout is as much about people and culture as it is about technology.

Internal Newsletter of Wins: Celebrate every AI success, big or small. An internal newsletter or dedicated communication channel highlighting how AI is saving time, increasing revenue, or improving employee experience builds enthusiasm and showcases tangible benefits.

Budget Line for Continuous Training: AI evolves rapidly. Dedicate a consistent budget for ongoing AI upskilling and specialized training to ensure your team’s skills remain sharp and they can leverage new AI capabilities as they emerge.

Executive Sponsorship: Ensure leadership remains visibly committed to AI initiatives. Their support is vital for overcoming resistance and driving adoption.

By systematically following this maturity ladder and integrating robust change management, you won’t just have an isolated AI pilot; you’ll have an AI center of excellence driving continuous innovation and value across your entire business.

Braveheart: Your Partner in Scaling AI Across Your Enterprise

Moving beyond pilot success to a full enterprise-wide AI rollout can be complex, requiring expertise in strategic planning, technical integration, and organizational change management. At Braveheart, we specialize in helping small and mid-sized businesses expand AI adoption effectively and sustainably.

We guide you through replicating proven patterns, seamlessly integrating AI into your core systems, and establishing an internal AI center of excellence that empowers your team for long-term success. Our structured approach, drawing on best practices and tools like our “Use-Case Backlog Template,” ensures your AI investments truly transform your business, delivering sustained value. Don’t let your AI potential be limited to a single project.

Ready to transform your business by scaling AI use cases?

Contact Braveheart today for a free consultation to discuss how we can help you build your internal AI capabilities and drive widespread adoption.