4 Reasons to Keep Advertising (Even When Others Cut Back)

Why Businesses That Keep Advertising Win, Even When Others Pull Back. It sounds counterintuitive: when revenue slows or uncertainty...

Harnessing the Power of AI for Small Business Marketing

https://www.youtube.com/watch?v=deOL5KUtkjc The marketing world is rapidly evolving with the advent of AI,...

How Many Social Media Platforms Should You Focus On?

How Many Social Media Platforms Should Your Business Use in 2026? The short answer: two to three platforms, done well,...

Mastering SEO in 2026: A Comprehensive Guide to the Four Pillars of Effective SEO Strategy

https://youtu.be/6pFHmg7jlbQ SEO, or search engine...

Local SEO AI Search

Directories, Yelp, and the Future of Local SEO in AI Search: https://www.youtube.com/watch?v=6pFHmg7jlbQ Artificial intelligence is already changing the way search engines...

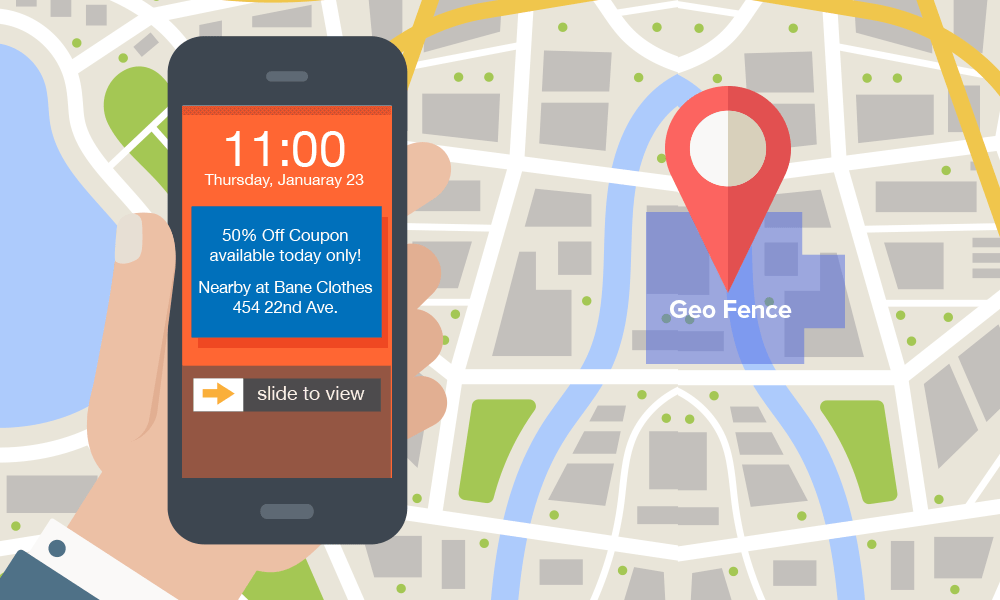

What Is Geofencing?

Geofencing marketing is location-based advertising in which a user’s location is recorded via the internet, and advertisements are...
